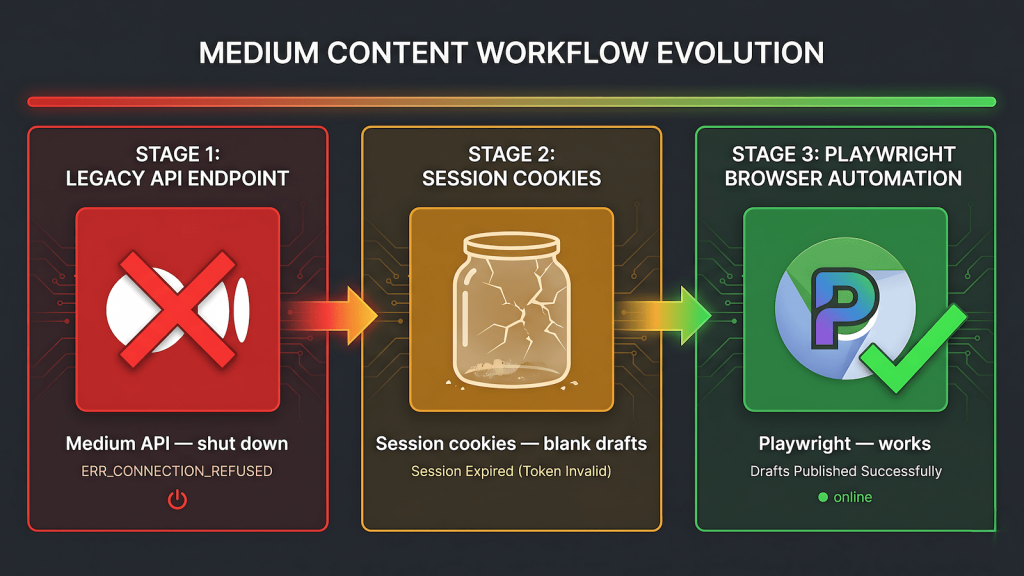

Medium used to have a public API. It had endpoints, documentation, and for a period it was a legitimate path for programmatic publishing. That access is gone. Medium shut down API token generation and the publishing endpoints are no longer available to external integrations. There is no waitlist, no application process, and no workaround at the API layer. If you are building a syndication pipeline and Medium is on your target list, the medium api alternative automation question is not how to fix the API — it is what replaces it entirely.

This post documents the full sequence of what we tried while building EchoCast, the syndication layer that runs on top of our n8n and Playwright stack. The goal was straightforward: publish a canonical-linked version of a WordPress post to Medium without manual work. What followed was a progression through three approaches before landing on one that holds up in production.

The API Is Gone, Not Broken

The distinction matters. A broken API suggests a temporary state that might get fixed. Medium’s API access being shut down is a policy decision. Token generation is not available for new integrations. Existing tokens that predate the shutdown may still function for some accounts, but for any new build starting from zero the API is not a viable path. We confirmed this at the start and did not spend time trying to revive it.

The Cookie Session Approach

With the API off the table, the first real attempt was session-based automation through n8n HTTP nodes. Medium runs on standard web sessions. Capturing valid session cookies from a logged-in browser and attaching them to HTTP requests gives you access to Medium’s internal endpoints as an authenticated user. We captured the full session using DevTools to look for sid, uid, and xsrf cookies and started mapping what was reachable.

This went significantly deeper than a quick test. HAR capture on the manual import flow exposed Medium’s internal GraphQL operations. We identified SubmitPublishPostMutation with the setPostPublished operation as the publish trigger. We found the new-story?logLockId=22 endpoint that creates a draft shell. We traced the _/upload-url call that uploads the featured image to Medium’s CDN before story creation. We matched the logLockId consistency that ties the import session together across calls. We chased cf_clearance cookie expiry as a candidate cause when results became inconsistent.

The import created draft shells successfully across multiple runs. Multiple untitled stories appeared in the Medium account with the correct post IDs. Zero words in each one. The content scraper never fired.

The manual import flow through medium.com/p/import-story triggers a server-side scrape that our direct new-story call did not trigger. The difference happens on Medium’s backend. Every payload we sent was structurally identical to what the manual import sent. The logLockId matched. The cookies were fresh. The scraper still did not fire. At that point we had mapped Medium’s internal API more thoroughly than most people ever will, and the conclusion was unavoidable: the content population step is intentionally gated server-side in a way that cannot be replicated through HTTP requests alone.

The Burp Suite Idea

Before moving to full browser automation, one alternative came up: use Burp Suite to intercept a successful manual import, capture the complete request sequence including whatever session state triggers the scraper, and replay it via n8n. The instinct was reasonable. Burp Suite is the correct tool for capturing and analyzing authenticated request flows, and the QA background made it a natural reach. We had already used it during the HAR analysis phase.

The structural problem is that replaying intercepted requests is session replay, not automation. The cf_clearance and XSRF tokens are bound to a specific browser session and expire. Medium’s behavioral detection flags request patterns that do not originate from a real browser context. Even if a single replay produced content, maintaining it as a daily production pipeline would require continuously re-capturing fresh session state which is more manual overhead than the original copy-paste problem it was meant to solve. Burp Suite is the right tool for security testing and API research. It is not the right tool for sustained unattended publishing automation.

The Playwright Approach

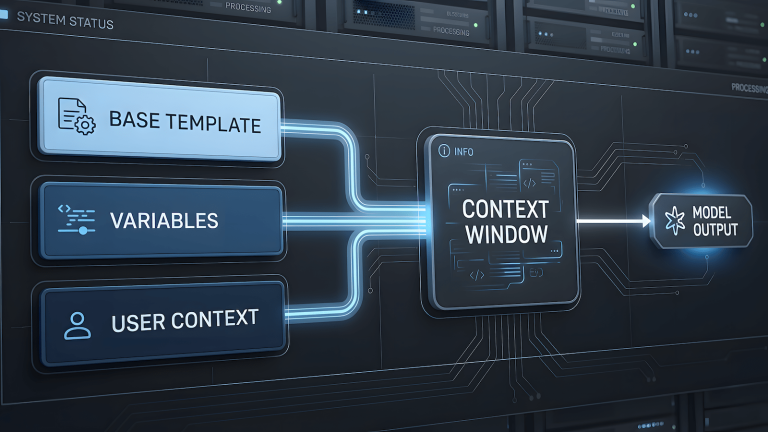

The solution that held was running Chromium through Playwright with a persistent authentication context. Instead of reverse-engineering Medium’s session management or replaying captured requests, we let a real browser handle everything. Playwright launches Chromium with a complete browser fingerprint such as proper user agent, standard headers, JavaScript execution, full cookie handling and navigates to Medium the way a human would. The auth context is saved to disk after the first manual login and reloaded on every subsequent run.

The posting flow navigates to the Medium new story editor, populates the title and body fields using Playwright’s locator API, and submits. Because a real browser is interacting with the real editor, the content scraper fires the same way it does for a human user. Medium cannot distinguish this from a person typing quickly. The persistent context means the session survives across runs until Medium forces a re-login, at which point the fix is one manual login session rather than a debugging investigation.

Locator selectors need periodic maintenance when Medium deploys frontend changes. This is the known cost of medium api alternative automation through browser control rather than HTTP — the same tradeoff that applies to any UI automation against a target you do not own. It is manageable in a way that session replay and cookie expiry were not. A broken selector is a one-hour fix. A server-side content gate you cannot replicate is a dead end.

The Production Setup

With the Playwright script stable, the integration into n8n required choosing how n8n triggers it. The Execute Command node works for one-off runs but is not the right structure for a pipeline that runs daily with persistent state requirements. We built echocast-server, a lightweight Express server managed by PM2 that receives POST requests from n8n’s HTTP Request node, triggers the appropriate Playwright script, and returns a structured response. Both n8n and echocast-server run as PM2 peers on the same machine, both surviving restarts without manual intervention.

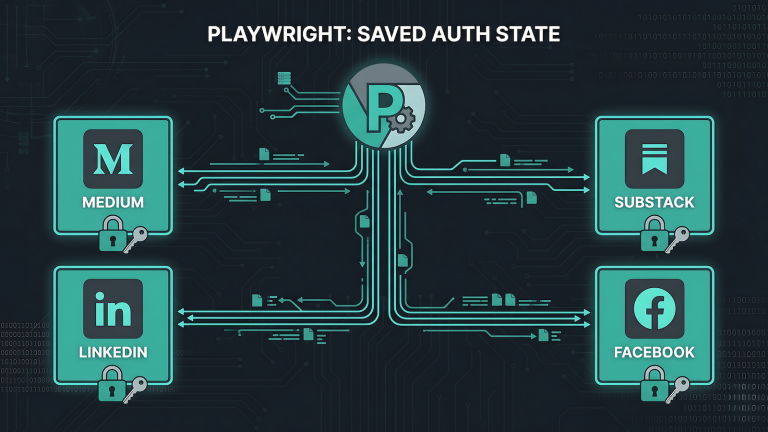

n8n passes the post title, body content, and canonical URL as a JSON payload. The Playwright script handles navigation, content entry, and submission. The response tells n8n whether the run succeeded, and n8n routes accordingly. The Medium Playwright script uses a persistent auth context stored at auth/medium, the same session management pattern applied to every other platform in the EchoCast stack.

How echocast-server is structured and why the Express approach was chosen over Execute Command is in Triggering Playwright from n8n. The persistent session management that keeps the auth context alive across runs is in Persistent Browser Sessions for Content Automation. The full syndication architecture is in n8n + Playwright Content Syndication.

What This Costs

The HAR analysis, the mutation hunting, the logLockId matching, the cf_clearance chase that investigation was not wasted work. It produced a precise diagnosis: the content scraper is gated server-side and cannot be triggered through HTTP requests alone. That diagnosis is what justified the Playwright investment with confidence. Without it, moving to browser automation might have felt like overkill for what looked like an API problem. With it, Playwright was clearly the only path.

The maintenance cost of UI automation is real but bounded. A broken selector is a fixable problem with a clear resolution path. A server-side gate that cannot be replicated is not fixable from the outside.