Everyone talks about vibe coding. Nobody talks about what happens after the code ships. Vibe coding is the practice of prompting an AI to write software with minimal formal specification. It works well enough for prototypes and solo projects. As a sustainable development practice on a revenue-critical product, it needs something around it. It needs a team. The AI human dev team workflow is what that team actually looks like in operation, and the gap between the public conversation about it and the operational reality is significant.

This is not a prediction about where software development is going. It is a description of where it already is, on a real SaaS product in active staging, with real tickets, real turnaround times, and real consequences when something breaks. The team is small. The roles are defined. And the thing that makes it work is not the AI. It is the coordination layer the humans build around it.

The Problem with “Vibe Coding” as a Model

Vibe coding positions the developer as a prompt engineer and the AI as the implementer. That framing is accurate as far as it goes. Where it falls short is in treating the output as done when the AI says it is. AI-generated code passes tests and breaks production more often than the solo vibe coder expects, because the AI tests what it built within the scope it was given. It does not test what the user will experience. It does not catch mutations introduced by a fix to an adjacent surface. It does not notice that two visually identical UI states mean completely different things to the person looking at the screen.

Vibe coding solo is a prototyping model. Vibe team software engineering is a production model. The difference is coordination, defined roles, and a human quality layer that the solo model skips entirely.

The Team

Four roles: three humans and one AI agent. One of the humans functions as the connective tissue between the other three roles.

The Founder holds product vision and acts as PM. This person decides what gets built, writes or directs the specs, sets priority, and owns the relationship with the product. In a traditional team this role interfaces with a dev team through layers of process. Here, they interface directly with the AI dev agent through tickets and prompts. The quality of their direction determines the quality of what gets built. This is not a passive role. The founder who thinks they can hand vague requirements to an AI agent and get a working product is going to spend a lot of time managing rework.

The AI Dev Agent handles implementation. It reads the ticket, writes the fix, commits, and closes the loop with a root cause comment explaining what it changed and why. It does not attend standups. It does not push back on scope. It does not have bad days. What it does is execute, accurately and consistently, within the scope of what it has been asked to do. It is not a collaborator in any meaningful sense. It is a very reliable executor, and that distinction matters when you are scoping tickets and managing the workflow. Understanding what agentic AI actually is and what its three operating types look like helps set the right expectations before you build a team around one.

The QA Engineer is the human verification layer. Every fix the AI ships gets tested by a human before it is called done. This is not optional overhead. This is the quality gate that keeps the product from accumulating silent regressions. The QA engineer tests against acceptance criteria, checks adjacent surfaces for mutations, and files new tickets when something breaks that was not in scope. The AI tests what it built. The QA engineer tests what the user will experience. That gap is where the role lives, and in an AI human dev team workflow it is a significantly expanded role, covering manual judgment, automation thinking, security surface checks, and light Scrum-adjacent loop management in a single engagement.

The QA Collaborator handles the organizational layer: documentation, ticket drafting, session management, and the paper trail that keeps the workflow coherent across sessions. This role is easy to underestimate. In an AI human dev team workflow, context does not transfer automatically between sessions the way it does in a human team where people carry institutional knowledge. The QA collaborator is what makes that context explicit and persistent. Without this role, the team loses continuity fast.

The Workflow in Practice

The loop is simple: ticket filed, AI agent picks it up, fix shipped, QA verifies, comment posted, ticket closed. On a good day, that loop closes in the same session: a bug caught in the morning is verified and closed by afternoon. That kind of turnaround is hard to match in a traditional dev team, where a bug report might sit in the backlog for a week before a developer touches it.
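One way to make the human gate in that loop explicit is to model the ticket as a small state machine in which only the QA role can mark a fix verified. The sketch below is an illustration, not the team's actual tooling: the status names, the role strings, and the `advance` helper are all assumptions introduced here.

```python
from enum import Enum


class Status(Enum):
    FILED = "filed"
    IN_PROGRESS = "in_progress"
    FIX_SHIPPED = "fix_shipped"
    VERIFIED = "verified"
    CLOSED = "closed"


# Allowed transitions, keyed by current status. Each value maps the next
# status to the role permitted to make that move. Role names are hypothetical.
TRANSITIONS = {
    Status.FILED: {Status.IN_PROGRESS: "ai_agent"},
    Status.IN_PROGRESS: {Status.FIX_SHIPPED: "ai_agent"},
    # QA either verifies the fix or sends the ticket back for rework.
    Status.FIX_SHIPPED: {
        Status.VERIFIED: "qa_engineer",
        Status.IN_PROGRESS: "qa_engineer",
    },
    Status.VERIFIED: {Status.CLOSED: "qa_engineer"},
    Status.CLOSED: {},
}


def advance(current: Status, target: Status, role: str) -> Status:
    """Return the new status, or raise if the move or the role is not allowed."""
    allowed = TRANSITIONS[current]
    if target not in allowed:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    if allowed[target] != role:
        raise PermissionError(f"{role} cannot move a ticket to {target.value}")
    return target
```

With this shape, the agent can ship a fix but cannot declare it verified, which is the property the loop depends on.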

How developers actually use AI in real workflows tends to look a lot messier than this on solo projects. The AI human dev team workflow is cleaner because the roles are defined and the loop has a human gate at every verification step. The founder is not also trying to test. The QA engineer is not also trying to write code. Everyone is in their lane, and the AI is doing the implementation work at a pace that keeps the rest of the loop honest.

The AI dev agent’s output quality is consistently high within its scope. It understands its own codebase. Its root cause analysis in ticket comments is accurate. Its fixes are clean and follow the patterns it established earlier in the build. When the ticket is well-scoped and the reproduction path is clear, the fix is usually right on the first attempt, and most tickets close on the first verification pass. That is the metric that matters: first-pass verification rate tells you whether the ticket quality and the agent’s fix quality are both where they need to be.
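First-pass verification rate is simple to compute once each closed ticket records how many QA passes it needed. The sketch below assumes a hypothetical `ClosedTicket` record with a `verification_passes` field; the sample tickets are invented purely to show the arithmetic and are not data from the product.

```python
from dataclasses import dataclass


@dataclass
class ClosedTicket:
    ticket_id: str
    verification_passes: int  # QA passes needed before the ticket closed (>= 1)


def first_pass_rate(tickets: list[ClosedTicket]) -> float:
    """Fraction of closed tickets that QA verified on the first pass."""
    if not tickets:
        raise ValueError("no closed tickets to measure")
    first_pass = sum(1 for t in tickets if t.verification_passes == 1)
    return first_pass / len(tickets)


# Invented sample: three tickets closed on the first pass, one needed rework.
sample = [
    ClosedTicket("T-1", 1),
    ClosedTicket("T-2", 1),
    ClosedTicket("T-3", 2),
    ClosedTicket("T-4", 1),
]
print(f"{first_pass_rate(sample):.0%}")  # 3 of 4 tickets -> 75%
```

A falling rate does not say which side slipped. It only signals that either ticket quality or fix quality needs a closer look.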

Where the Seams Show

The workflow is fast. It is not frictionless. The seams are real, and being clear about them matters more than overselling the model.

AI tooling access gaps create manual steps that slow the loop. The agent cannot always interact directly with project management tools. It cannot move its own tickets, update status fields, or trigger the next step in the pipeline without a human doing it. These handoffs are small individually and add up across a day of active work. They are the kind of friction that traditional dev tooling solves natively and that AI-integrated workflows have not fully eliminated yet. Fragmentation across AI systems is a real operational cost, and the AI human dev team workflow is not immune to it.

Speed creates mutations. The faster code ships, the higher the chance that a fix to one surface breaks something adjacent. The agent does not run a full regression pass before committing. It fixes what it was asked to fix and ships. The human QA layer catches these, but catching them requires testing beyond the stated scope of the ticket, which means the QA role in this workflow is broader than it sounds on paper.
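Testing beyond the ticket's stated scope is easier to do consistently when the adjacent surfaces are written down. The sketch below assumes a hand-maintained adjacency map; the surface names and the `surfaces_to_retest` helper are hypothetical examples, not part of the product described here.

```python
# Hypothetical map from a product surface to the surfaces that share code
# or state with it. In practice a human would maintain this by hand.
ADJACENT = {
    "login": {"signup", "password_reset"},
    "billing": {"invoices", "plan_picker"},
    "invoices": {"billing"},
}


def surfaces_to_retest(changed: set[str]) -> set[str]:
    """Return the surfaces a ticket touched plus their direct neighbors."""
    result = set(changed)
    for surface in changed:
        # Surfaces missing from the map contribute no neighbors.
        result |= ADJACENT.get(surface, set())
    return result
```

Calling `surfaces_to_retest({"billing"})` yields the billing surface plus its two listed neighbors, which gives QA a minimum checklist rather than a full regression plan.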

Context gaps between sessions require documentation discipline. The agent does not remember what was tested last week. The product owner does not always remember which surfaces are fragile. Without explicit documentation of what has been verified, flagged, and deferred, the team loses institutional knowledge fast. The QA collaborator role exists specifically to prevent this. It is not glamorous work. It is what makes the rest of the workflow function.
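The record of what has been verified, flagged, and deferred can be as plain as an append-only ledger that is saved between sessions. The sketch below is one assumed shape for it: the `LedgerEntry` fields, the three state strings, and the helper names are illustrative, and serializing with `json.dumps` is just one possible way to persist it.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date
from typing import Optional

VALID_STATES = {"verified", "flagged", "deferred"}


@dataclass
class LedgerEntry:
    surface: str
    state: str        # one of VALID_STATES
    note: str
    recorded_on: str  # ISO date string, so the entry serializes cleanly


def record(ledger: list[dict], surface: str, state: str, note: str, day: date) -> None:
    """Append one entry; reject states outside the agreed vocabulary."""
    if state not in VALID_STATES:
        raise ValueError(f"unknown state: {state}")
    ledger.append(asdict(LedgerEntry(surface, state, note, day.isoformat())))


def latest_state(ledger: list[dict], surface: str) -> Optional[str]:
    """Most recent state recorded for a surface, or None if never recorded."""
    for entry in reversed(ledger):
        if entry["surface"] == surface:
            return entry["state"]
    return None


def dump(ledger: list[dict]) -> str:
    """Serialize the ledger so the next session can load it back."""
    return json.dumps(ledger, indent=2)
```

Because entries are only appended, the ledger doubles as a history: the latest entry answers "what is the state now" and the earlier ones answer "what did we already try".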

Not all AI dev agents are properly scoped by default. Left with ambiguous tickets or broad mandates, some will drift: making decisions that were not asked for, refactoring things that were not in scope, or producing fixes that technically pass acceptance criteria but break the spirit of the requirement. This is not a failure of AI development as a concept. It is a scoping problem. Tight tickets, explicit scope boundaries, and a human verification layer that checks for intent drift rather than just acceptance criteria are what keep it under control. When AI gives you more work instead of less, the cause often traces back to exactly this: loose briefs generating work that needs rework.
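One cheap signal of drift is a commit that touches files outside the ticket's declared scope. The sketch below assumes each ticket lists allowed path patterns, which is a convention invented for this example. It uses `fnmatchcase` from Python's standard `fnmatch` module, where `*` also matches path separators. It flags files for a human to review and does not judge intent.

```python
from fnmatch import fnmatchcase


def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return the changed files that match none of the ticket's allowed patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatchcase(path, pattern) for pattern in allowed_patterns)
    ]


# Hypothetical ticket scoped to the billing module only.
changed = ["src/billing/invoice.py", "src/auth/session.py"]
print(out_of_scope(changed, ["src/billing/*"]))  # ['src/auth/session.py']
```

An empty result does not prove the fix stayed within the intent of the ticket. It only removes one obvious way for it to have strayed, so the human check for intent drift still applies.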

The Economics

This is a bootstrapped setup. The budget is lean. The AI agent draws no salary; its cost is compute and tooling subscriptions. The human roles are focused: one PM, one QA engineer, and one organizational layer, with one AI agent doing implementation work that would traditionally require a multi-person dev team.

The model works because the human roles are high-leverage. The founder’s time goes into product decisions, not implementation. The QA engineer’s time goes into verification and finding what the agent misses, not writing code. The QA collaborator’s time goes into keeping the context machine running. None of these roles are doing work the AI could do. They are doing the work the AI cannot do: judgment, verification, institutional memory.

The economics shift is not incremental. It is structural. A bootstrapped founder who previously could not afford a developer can now direct an AI dev agent at a fraction of the cost of a full-time hire. The constraint that was once headcount is now tooling access and the quality of the human coordination layer. Teams that invest in that coordination layer run faster and ship cleaner. Teams that skip it accumulate technical debt and rework at the speed of AI output.

What This Means for the Industry

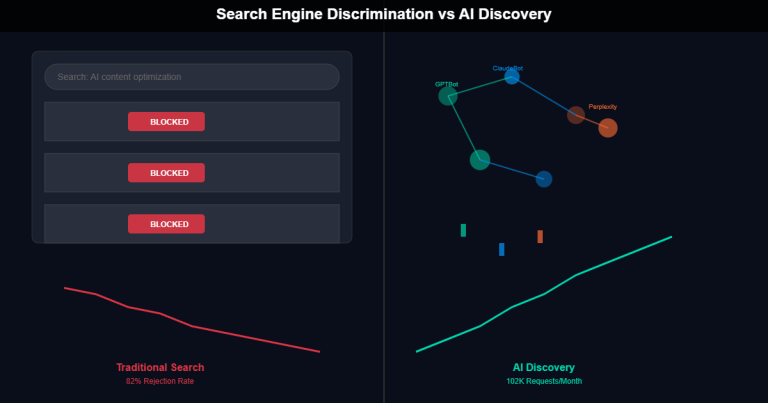

This is not a future state. It is an operational reality on a product in active staging right now. The conversation about AI replacing software developers is happening at the wrong level of abstraction. The more useful question is which roles in a software team survive when AI handles implementation.

The answer, from inside a functioning AI human dev team workflow, is the roles that require what AI cannot replicate: judgment, context, relationship, and verification. The PM who understands the product well enough to direct an AI agent accurately. The QA engineer who knows what the user will experience and can catch the failures that never made it into a ticket. The person who holds the institutional knowledge that keeps the team coherent across sessions. Whether AI will actually replace jobs depends heavily on which part of the job you are looking at. Execution is at risk. Judgment is not.

What does not survive is implementation work that can be specified clearly enough for an AI to execute. That is not a small category. But it is not the whole job, and the roles built around judgment and verification are not at risk. They are increasingly the constraint on how fast AI-assisted teams can actually move.

Takeaway

Vibe coding is solo. You and a prompt and a codebase that may or may not hold together under pressure. Vibe team software engineering is coordinated. It has defined roles, a documented workflow, a quality gate, and a human layer that holds the context the AI cannot carry.

The AI human dev team workflow works. It is not perfect. The seams are real, the friction points are real, and the documentation discipline required to keep it coherent is not nothing. But it ships, it closes bugs fast, and it does it at a cost structure that was not viable two years ago. If you are building a product and trying to figure out how to run lean without sacrificing quality, this is the model worth understanding. Know your role in the loop. Keep the human in the loop at every verification step. That is what makes it work.