The phrase “engineered AI” keeps showing up in searches, in developer threads, and in Google Search Console (GSC) impressions for sites covering AI tools and workflows. It’s not an official taxonomy yet: no standards body coined it, and no single conference talk launched it. But it’s forming as a real concept, and it’s forming fast, because the industry finally needs a word for the thing that isn’t vibe coding, isn’t pure generative AI, and isn’t the breathless agentic future that vendor decks keep promising. What is engineered AI, exactly? That’s worth pinning down before the term gets diluted by everyone who wants to slap a serious-sounding label on their prompt workflow.

What Engineered AI Is Not

Before the definition, clear the field. Generative AI refers specifically to models that produce new content: text, images, code, audio. It’s a technical category describing a class of AI capability, not a methodology. Agentic AI refers to systems where models take autonomous, multi-step actions with minimal human intervention, chaining tools, decisions, and outputs across a workflow. Both are real terms with real technical meaning, and engineered AI is not a replacement for either of them.

Vibe coding sits at the opposite end of the spectrum from engineered AI, and it’s useful as contrast. The term describes a development style where you prompt an AI with high-level intent, accept what it produces, and don’t look too hard at what’s underneath. It works for prototypes. It collapses when AI-generated code passes tests but breaks in production. The backlash to that failure mode is where the demand for “engineered AI” starts making sense, because practitioners needed a phrase for the opposite: deliberate, structured, tested, and built to hold up.

The Working Definition

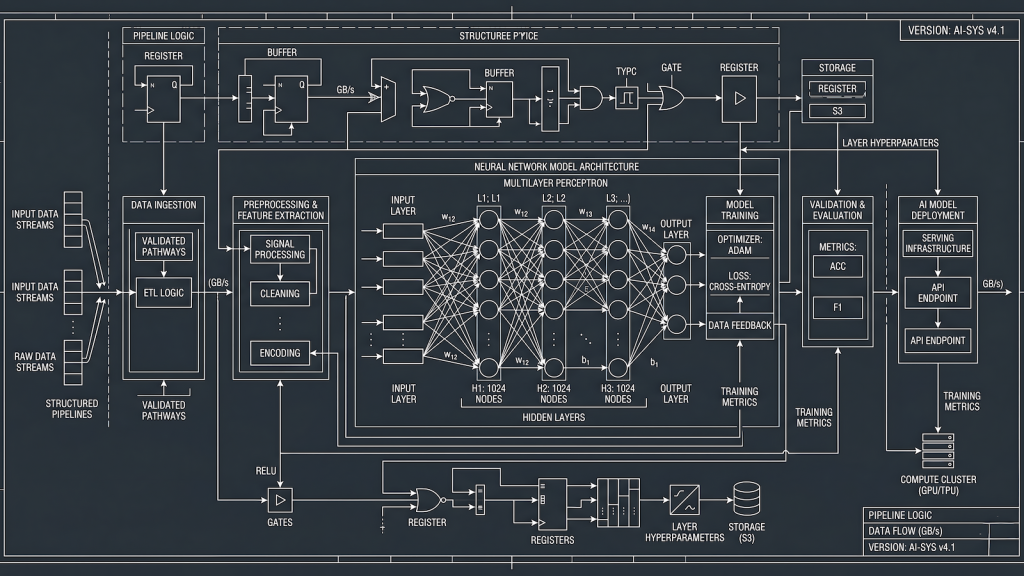

Engineered AI is the practice of integrating AI into systems, workflows, and pipelines with the same rigor you would apply to any other engineering problem. That means defined inputs and outputs. That means failure modes mapped before deployment. That means the AI component is treated as a component, with dependencies, constraints, validation requirements, and a clear role in the larger system, not as a magic box you call and hope for the best.

The distinction isn’t about which model you use or whether you write prompts. It’s about whether the AI integration is designed or just bolted on. An engineered AI system has observable behavior. You can test it. You can catch when it drifts. You can explain to someone else how it works and why. A non-engineered AI integration is one where the whole thing runs on vibes and tribal knowledge, where nobody knows what happens when the model updates, and where “it usually works” passes for documentation. That’s not a workflow. That’s a liability.
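“You can catch when it drifts” is easy to make concrete. Below is a minimal Python sketch, assuming the AI component is wrapped in a plain function and a small set of human-reviewed input/output pairs (a “golden set”) lives in version control next to the prompt. The ticket labels, `GOLDEN_CASES`, `drift_report`, `assert_no_drift`, and `stub_classifier` are all hypothetical; the stub stands in for a real model call so the check can run offline. Re-running the same set after a model or prompt update turns “it usually works” into a measurable pass rate.

```python
from typing import Callable, List, Tuple

# Hypothetical human-reviewed cases, versioned alongside the prompt.
GOLDEN_CASES: List[Tuple[str, str]] = [
    ("My invoice shows the wrong amount", "billing"),
    ("The app crashes when I upload a photo", "bug"),
    ("How do I change my password?", "account"),
]


def drift_report(component: Callable[[str], str], cases: List[Tuple[str, str]]):
    """Run every golden case; return (pass_rate, failures)."""
    failures = []
    for text, expected in cases:
        actual = component(text)
        if actual != expected:
            failures.append((text, expected, actual))
    pass_rate = 1 - len(failures) / len(cases)
    return pass_rate, failures


def assert_no_drift(component: Callable[[str], str], cases, min_pass_rate: float = 1.0) -> None:
    """Fail loudly when behavior drops below the agreed pass rate."""
    rate, failures = drift_report(component, cases)
    if rate < min_pass_rate:
        raise AssertionError(f"pass rate {rate:.0%} < {min_pass_rate:.0%}: {failures}")


def stub_classifier(text: str) -> str:
    """Hypothetical stand-in for the real model call so the check runs offline."""
    lowered = text.lower()
    if "invoice" in lowered or "charge" in lowered:
        return "billing"
    if "crash" in lowered:
        return "bug"
    return "account"


if __name__ == "__main__":
    assert_no_drift(stub_classifier, GOLDEN_CASES)  # all three golden cases match the stub
    # Simulate a model update that now labels everything "billing".
    rate, failures = drift_report(lambda text: "billing", GOLDEN_CASES)
    print(f"simulated drifted model: {rate:.0%} pass rate, {len(failures)} failures")
```

Exact-match comparison suits classification-style outputs; free-text outputs would need a looser comparison, which this sketch does not attempt.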

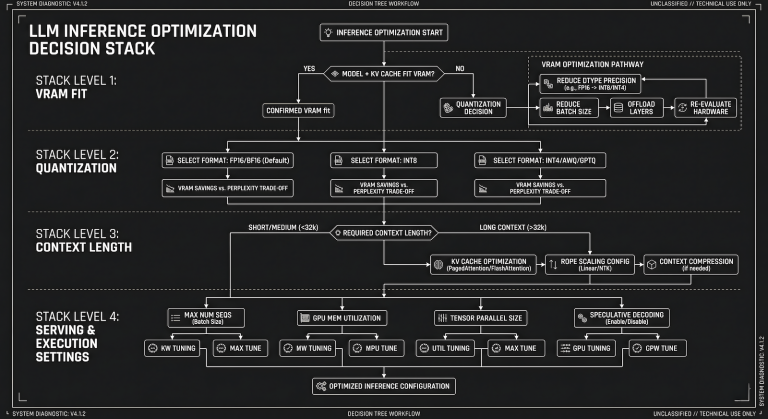

What is engineered AI in practice? It’s the person who writes test coverage for their AI outputs, not just their code. It’s the workflow where Ollama runs locally, the prompt is version-controlled, and the output gets validated against a schema before it touches anything downstream. It’s context engineering: the deliberate construction of everything the model needs to produce reliable output, treated as a first-class artifact rather than an afterthought. The QA engineer who treats an LLM as a black-box system under test, not a teammate, is already practicing engineered AI whether they call it that or not.
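Here is a minimal sketch of the validate-before-downstream step in Python, using only the standard library. It assumes the prompt asks the model for a JSON object with `title`, `tags`, and `sentiment` fields; that schema, `PROMPT_VERSION`, `validate_output`, and `OutputRejected` are hypothetical. In a real local setup the raw string would come from the model (for example, one served by Ollama); here hard-coded strings stand in so the edge cases are reproducible.

```python
import json

PROMPT_VERSION = "summarize-v3"  # hypothetical; bumped with every prompt change in git

# Hypothetical contract for what the prompt asks the model to return.
SCHEMA = {"title": str, "tags": list, "sentiment": str}
ALLOWED_SENTIMENT = {"positive", "neutral", "negative"}


class OutputRejected(ValueError):
    """Model output did not match the contract; nothing downstream should see it."""


def validate_output(raw: str) -> dict:
    """Parse raw model text and enforce the schema, or raise OutputRejected."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise OutputRejected(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise OutputRejected("top-level value must be a JSON object")
    missing = [key for key in SCHEMA if key not in data]
    if missing:
        raise OutputRejected(f"missing fields: {missing}")
    extra = sorted(set(data) - set(SCHEMA))
    if extra:
        raise OutputRejected(f"unexpected fields: {extra}")
    for key, expected_type in SCHEMA.items():
        if not isinstance(data[key], expected_type):
            raise OutputRejected(f"{key} must be {expected_type.__name__}")
    if not all(isinstance(tag, str) for tag in data["tags"]):
        raise OutputRejected("tags must contain only strings")
    if data["sentiment"] not in ALLOWED_SENTIMENT:
        raise OutputRejected(f"unexpected sentiment: {data['sentiment']!r}")
    return data


# Known edge cases, kept as regression tests for this prompt/model pair.
EDGE_CASES = [
    "",  # empty response
    "Sure! Here is the JSON you asked for.",  # prose instead of JSON
    '{"title": "x", "tags": "finance", "sentiment": "neutral"}',  # wrong type
    '{"title": "x", "tags": [], "sentiment": "angry"}',  # value outside the allowed set
]

if __name__ == "__main__":
    good = '{"title": "Q3 report", "tags": ["finance"], "sentiment": "neutral"}'
    print(PROMPT_VERSION, validate_output(good)["title"])
    for case in EDGE_CASES:
        try:
            validate_output(case)
            print("ACCEPTED (should not happen):", repr(case))
        except OutputRejected as err:
            print("rejected:", err)
```

Rejecting with a named exception, rather than passing along whatever happened to parse, is what makes the failure loud instead of silent. In a larger system, a dedicated library such as `jsonschema` or Pydantic would replace the hand-written checks.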

Why the Distinction Matters Right Now

The window between “everyone is adopting AI” and “everyone is dealing with the consequences of bad AI adoption” is closing. Teams that shipped vibe-coded integrations in the past year are now sitting on production systems they can’t explain, can’t debug reliably, and can’t safely modify. The AI hype cycle has a predictable second act, and we’re entering it. People are searching for “engineered AI” because they’re looking for a framework that separates practitioners from prompt-spammers.

This also changes how tools get evaluated. The questions that matter in an engineered AI context are not the ones marketing decks answer. Does this tool expose its behavior clearly enough to test? Does it fail gracefully or silently? Can you constrain its outputs to a format your system actually handles? Those aren’t demo questions. They’re the same questions a QA engineer asks about any system component, which is why balancing AI-driven testing with manual rigor isn’t just a QA problem anymore. It’s the template for every team integrating AI into production.

The term is also converging from multiple directions simultaneously, which is how technical vocabulary solidifies. The energy sector has been using “engineered AI” to describe physics-driven, deliberately constrained models tuned for specific real-world problems. Academic frameworks are building it out as a discipline. Agentic AI is maturing to the point where the question isn’t whether to use it but how to build it so it doesn’t go sideways. Engineered AI is the answer to that question.

What Engineered AI Looks Like in Practice

A few concrete examples make the definition stick. A content syndication pipeline using n8n automation where every step has a log, a retry condition, and a defined failure path: that’s engineered AI infrastructure. A local LLM stack where model selection is based on documented requirements, prompts are tested against known edge cases, and outputs are validated before they reach any downstream system: that’s engineered AI in a personal workflow. An agentic AI workflow where the agent’s scope is bounded, its outputs are checkpointed, and a human reviews before anything irreversible happens: that’s engineered AI applied to automation.
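The first and third examples share a shape: a step that logs what it does, retries only failures worth retrying, takes a defined path when retries run out, and stops for a human before anything irreversible. The Python sketch below expresses that shape in plain code. It is not how n8n itself is configured; `TransientError`, `run_step`, `gated_publish`, and the dead-letter list are hypothetical names chosen for illustration.

```python
import logging
import time
from typing import Callable, List, Optional, TypeVar

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(name)s: %(message)s")
log = logging.getLogger("pipeline")

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""


def run_step(
    name: str,
    action: Callable[[], T],
    attempts: int = 3,
    delay_s: float = 0.0,
    on_failure: Optional[Callable[[str], None]] = None,
) -> Optional[T]:
    """Run one step with logging, bounded retries, and a defined failure path.

    Only TransientError is retried; any other exception propagates so bugs fail loudly.
    """
    for attempt in range(1, attempts + 1):
        try:
            result = action()
            log.info("%s succeeded on attempt %d", name, attempt)
            return result
        except TransientError as exc:
            log.warning("%s attempt %d/%d failed: %s", name, attempt, attempts, exc)
            if attempt < attempts:
                time.sleep(delay_s)
    log.error("%s exhausted %d attempts; taking failure path", name, attempts)
    if on_failure is not None:
        on_failure(name)  # e.g. record the item in a dead-letter list for later review
    return None


def gated_publish(draft: str, approve: Callable[[str], bool], publish: Callable[[str], None]) -> bool:
    """Checkpoint: the irreversible action runs only after explicit human approval."""
    if not approve(draft):
        log.info("publish blocked: reviewer did not approve")
        return False
    publish(draft)
    log.info("published after approval")
    return True


if __name__ == "__main__":
    calls = {"n": 0}

    def flaky_fetch() -> str:
        """Simulated source that times out twice, then succeeds."""
        calls["n"] += 1
        if calls["n"] < 3:
            raise TransientError("timeout")
        return "draft text"

    dead_letter: List[str] = []
    draft = run_step("fetch", flaky_fetch, attempts=3, on_failure=dead_letter.append)
    if draft is not None:
        # In this demo the reviewer declines, so nothing is published.
        gated_publish(draft, approve=lambda d: False, publish=print)
```

Only `TransientError` triggers a retry; any other exception surfaces immediately, on the assumption that a bug should fail loudly rather than be retried into silence. Returning `None` as the failure signal is a simplification that assumes no step legitimately returns `None`.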

The common thread is intentionality at the system level. The AI does a job. The job has a spec. The spec gets validated. The whole thing is observable and reproducible. That’s the practice, regardless of what model is running underneath or what vendor built the tooling.

Where This Site Fits

EngineeredAI.net has been operating from this position since launch, before the term started circulating in GSC data and developer threads. Every tool review leads with limitations. Every workflow breakdown documents what breaks, not just what works. The site exists because “it usually works” is not a system. The term “engineered AI” finally has enough gravity to anchor that positioning explicitly, and this is the site that defines it in practice, not in theory. If you’re here because you searched for what engineered AI means, you’re already thinking the right way about it.