The AI-replaces-jobs debate is mostly conducted by people who haven’t actually tried to replace parts of their own work with AI and measured the results. The honest answer isn’t a prediction about the economy; it’s a documented account of which specific tasks transferred to AI tools and which didn’t hold up under real working conditions. Here’s what actually got replaced in a content production and QA automation workflow, and where the limits were concrete enough to measure.

This isn’t about whether AI is impressive or whether it has potential. It’s about the gap between the demo and the daily workflow, which is wider than most coverage acknowledges. The tools that made it into permanent rotation are the ones that reduced time or friction on a specific task without introducing new overhead to manage the AI’s output. Knowing what AI actually does in a real workflow is more useful than knowing what it can theoretically do.

What Transferred Successfully

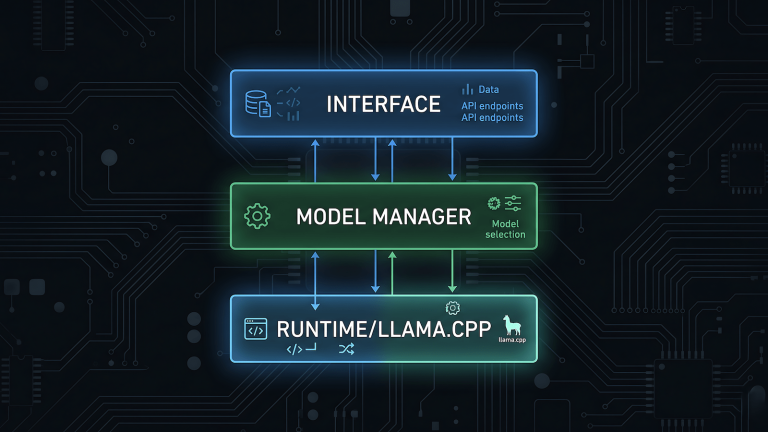

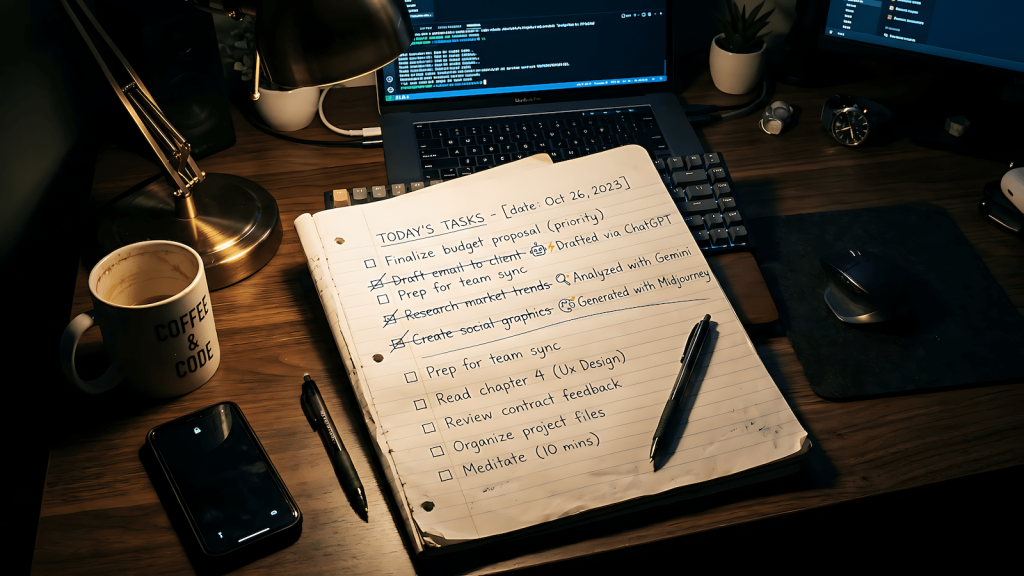

First-draft generation for structured content is the clearest win. Blog post drafts, documentation outlines, email templates, and structured reports all transfer well to a local LLM workflow. The output always requires editing, but editing takes less time than writing from scratch, and the structure is usually sound enough to work with. This held up across a multi-site content network running n8n workflows with Ollama for draft generation.
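For a sense of what the draft-generation step looks like under the hood, here is a minimal Python sketch of the request an HTTP step in such a workflow might send to Ollama’s `/api/generate` endpoint. It assumes Ollama is running locally on its default port (11434); the model name `llama3` and the prompt wording are placeholders, not the exact setup used in the workflow described above. Pinning the section list in the prompt is what keeps the draft’s structure predictable enough to edit.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_draft_request(topic: str, sections: list[str], model: str = "llama3") -> dict:
    """Build a non-streaming /api/generate payload for a structured first draft.

    The model name and prompt template are illustrative placeholders.
    """
    outline = "\n".join(f"- {s}" for s in sections)
    prompt = (
        f"Write a first draft of a blog post about: {topic}\n"
        f"Use exactly these sections, in this order, as headings:\n{outline}\n"
        "Keep each section under 200 words."
    )
    # stream=False makes Ollama return one JSON object instead of a chunk stream.
    return {"model": model, "prompt": prompt, "stream": False}


def parse_draft(body: str) -> str:
    """Extract the generated text from a non-streaming response body."""
    return json.loads(body)["response"]


def generate_draft(payload: dict, url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """POST the payload to Ollama and return the draft text (needs a running server)."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_draft(resp.read().decode("utf-8"))
```

Usage, with a server running: `generate_draft(build_draft_request("local LLM workflows", ["Setup", "Results"]))`. The returned text is still a first draft and goes to a human editor.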

Boilerplate code generation is another genuine transfer. Test scaffolding, repetitive automation script components, configuration files, and standard CRUD operations all generate acceptably on the first attempt. The code usually works but doesn’t account for edge cases or project-specific conventions unless you prompt for them explicitly. The time saved on boilerplate goes to the parts that require actual engineering judgment.
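Because conventions and edge cases only show up when you ask for them, it helps to make them part of every code-generation request. The sketch below is a hypothetical prompt builder, not a tool from the workflow described here; `CodegenRequest` and `build_codegen_prompt` are illustrative names.

```python
from dataclasses import dataclass, field


@dataclass
class CodegenRequest:
    """Hypothetical container for a boilerplate-generation request."""
    task: str
    conventions: list[str] = field(default_factory=list)  # project-specific rules
    edge_cases: list[str] = field(default_factory=list)   # cases the model would otherwise skip


def build_codegen_prompt(req: CodegenRequest) -> str:
    """Assemble a prompt that states conventions and edge cases up front."""
    parts = [f"Task: {req.task}"]
    if req.conventions:
        parts.append("Follow these project conventions:")
        parts.extend(f"- {c}" for c in req.conventions)
    if req.edge_cases:
        parts.append("Handle these edge cases explicitly:")
        parts.extend(f"- {e}" for e in req.edge_cases)
    parts.append("Return only the code.")
    return "\n".join(parts)
```

Keeping conventions in a reusable list means they are written once per project instead of being retyped (or forgotten) in each prompt.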

Research synthesis from a defined source set works when you control the inputs. Passing a set of documents to a local model and asking it to extract, compare, or summarize specific information is reliable for factual extraction tasks. It breaks down for interpretive tasks that require understanding context the model doesn’t have.
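One way to keep extraction tied to the inputs you control is to label each source, require the model to cite those labels, and then check the citations mechanically. The following is a minimal sketch under that assumption; the `S1`, `S2` ID scheme and both function names are illustrative, and a passing check only shows that citations point at real sources, not that the cited claims are correct.

```python
import re

# Assumption: source IDs follow the pattern S1, S2, ...
CITATION = re.compile(r"\[(S\d+)\]")


def build_extraction_prompt(question: str, documents: dict[str, str]) -> str:
    """Restrict the model to the supplied documents and require source citations."""
    blocks = [f"[{doc_id}]\n{text}" for doc_id, text in documents.items()]
    return (
        "Answer using ONLY the sources below. Cite every claim with its source ID "
        "in square brackets, e.g. [S1]. If the sources do not contain the answer, "
        "reply NOT FOUND.\n\n"
        + "\n\n".join(blocks)
        + f"\n\nQuestion: {question}"
    )


def unknown_citations(answer: str, documents: dict[str, str]) -> set[str]:
    """Return cited IDs that don't match any supplied document."""
    return set(CITATION.findall(answer)) - set(documents)
```

A non-empty result from `unknown_citations` is a cheap signal that the model drifted outside the source set and the answer needs a manual look.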

What Didn’t Transfer

Judgment calls didn’t transfer. Deciding whether a test case is worth writing, whether a piece of content serves its audience, or whether an architecture decision is sound requires context about the specific project and accumulated experience that a model doesn’t have and can’t simulate convincingly. Attempts to use AI for these decisions produced outputs that sounded reasonable but were consistently wrong in ways that took domain knowledge to catch.

Debugging non-trivial code didn’t transfer reliably. AI code suggestions for simple, isolated bugs are often useful. For bugs that emerge from system interactions, timing dependencies, or state management problems in complex codebases, the suggestions are frequently plausible-looking non-solutions. The model doesn’t understand the system; it pattern-matches against code that looks similar to what it’s seen. That’s useful for simple cases and not useful for hard ones.

Anything requiring current information failed predictably. A model with a training cutoff doesn’t know about library updates, API changes, new tool releases, or recent research. Every workflow that touched current information required verification against primary sources, which eliminated most of the time savings.
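Verification against primary sources can’t be automated away, but one narrow class of training-cutoff error can be caught cheaply: a suggested function or attribute that doesn’t exist in the version you actually have installed. This sketch uses only the standard library (`importlib` and `importlib.metadata`); it is an illustrative pre-check, not a substitute for reading the changelog, since it confirms a name exists but says nothing about whether its behavior changed.

```python
import importlib
from importlib import metadata
from typing import Optional


def api_exists(module_name: str, attr_path: str) -> bool:
    """Check that a dotted attribute path (e.g. 'path.join') exists in the installed module."""
    try:
        obj = importlib.import_module(module_name)
    except ImportError:
        return False
    for part in attr_path.split("."):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


def installed_version(dist_name: str) -> Optional[str]:
    """Return the installed distribution version, or None if it isn't installed."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None
```

Comparing `installed_version(...)` with the version a model assumes in its answer is a quick way to spot when its suggestions predate the library you are running.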

The Honest Pattern

AI replaced the mechanical parts of work, the parts that required time and attention but not judgment. It didn’t replace the parts that required understanding what good looks like in a specific context, reasoning about failure modes, or making decisions that depended on knowing how a specific system behaves. That’s a useful boundary to understand before building workflows around AI assistance, because crossing it produces confident-looking wrong answers rather than obviously incomplete ones.