The AI tools market is producing a category of product that didn’t exist five years ago: the API wrapper dressed as a platform. Take a foundation model API, add a branded interface, write marketing copy about proprietary AI, and charge a subscription. The underlying capability is unchanged from what you could access directly, and the added value is marginal at best. Knowing how to tell whether an AI tool is real engineering or a wrapper is a practical skill for anyone making purchasing decisions or evaluating tools for a workflow.

This isn’t a complaint about wrappers as a business model. Wrapping an API and making it accessible is a legitimate product decision. The problem is when wrapper products claim capabilities they don’t have, charge prices that assume proprietary technology, or lock your data into a system that adds no defensible value over the underlying API. Evaluating AI tools honestly means applying a consistent technical filter before accepting marketing claims at face value.

The Technical Tells

Output quality that matches a known model is the first signal. If a tool’s output is indistinguishable from GPT-4o or Claude Sonnet in quality, style, and failure modes, the tool is likely calling one of those APIs. Run the same prompt through the tool and through the underlying model you suspect it’s using. Matching output patterns, identical hallucination types, and the same context window limitations are strong indicators that the “proprietary AI” is just a named API call.
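The side-by-side comparison described above can be roughly quantified. The following is a minimal sketch, assuming you have already collected responses to the same prompts from the tool and from the model you suspect it uses. The function names and sample strings are hypothetical, and word-level text similarity is only a crude proxy for "same model".

```python
from difflib import SequenceMatcher
from statistics import mean


def similarity(a: str, b: str) -> float:
    """Word-level similarity between two outputs, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()


def mean_similarity(tool_outputs: list[str], model_outputs: list[str]) -> float:
    """Average similarity over paired outputs for the same prompts."""
    if not tool_outputs or len(tool_outputs) != len(model_outputs):
        raise ValueError("need two non-empty lists of equal length")
    return mean(similarity(t, m) for t, m in zip(tool_outputs, model_outputs))


# Placeholder strings standing in for responses you collected by hand.
tool_outputs = ["The capital of France is Paris.", "I cannot verify that claim."]
model_outputs = ["The capital of France is Paris.", "I can't verify that claim."]
print(round(mean_similarity(tool_outputs, model_outputs), 2))
```

Because sampling makes even the same model answer differently on each run, a score is only meaningful against a baseline: compare the suspected model with itself across two runs, then see whether the tool's score falls in the same range.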

Latency patterns reveal infrastructure decisions. A tool whose response latency closely tracks OpenAI’s API response times is probably routing through OpenAI. A tool with consistently different latency (faster on simple queries, slower on complex ones) might be running its own inference infrastructure, though it could also be using a different provider. Latency alone isn’t conclusive, but it’s part of the pattern.
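One way to compare latency patterns is to time the same requests against the tool and against the suspected provider, then look at the median and the tail. This is a sketch under stated assumptions: `send_request` is a hypothetical callable you would write yourself to issue one request, and the sample numbers below are invented for illustration, not measurements.

```python
import time
from statistics import median
from typing import Callable


def time_calls(send_request: Callable[[], object], n: int) -> list[float]:
    """Call send_request n times and return each duration in seconds."""
    samples = []
    for _ in range(n):
        start = time.perf_counter()
        send_request()
        samples.append(time.perf_counter() - start)
    return samples


def latency_profile(samples: list[float]) -> dict[str, float]:
    """Median and nearest-rank 95th percentile of a list of durations."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return {"median": median(ordered), "p95": ordered[rank - 1]}


def relative_gap(tool: list[float], direct: list[float]) -> float:
    """How much slower (positive) or faster (negative) the tool's median is."""
    return (median(tool) - median(direct)) / median(direct)


# Invented durations in seconds, standing in for real measurements.
tool_samples = [1.30, 1.42, 1.35, 1.51, 1.38]
direct_samples = [1.21, 1.33, 1.25, 1.40, 1.29]
print(latency_profile(tool_samples), latency_profile(direct_samples))
print(f"median gap: {relative_gap(tool_samples, direct_samples):+.1%}")
```

A small, steady overhead on top of the direct API's profile is consistent with an extra network hop through a wrapper, but network conditions, caching, and streaming settings all shift these numbers, so treat the result as one input among several.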

Data handling vagueness is a red flag. Real AI infrastructure companies can tell you exactly where inference happens, whether your prompts are used for training, and what their data retention policy is. Wrapper products often have vague terms because the answer is “your data goes to our API provider and we inherit their terms.” If a vendor can’t tell you specifically where your data is processed, that’s not an oversight.

What Real Engineering Looks Like

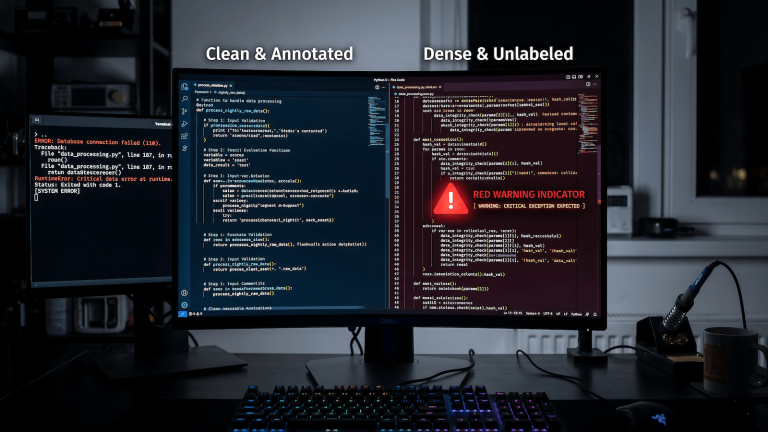

Fine-tuned models with documented training data and benchmark results on relevant tasks are evidence of actual AI development work. The fine-tuning doesn’t need to be massive. A domain-specific LoRA adapter on a base model represents real engineering, but there should be something to show beyond a system prompt.

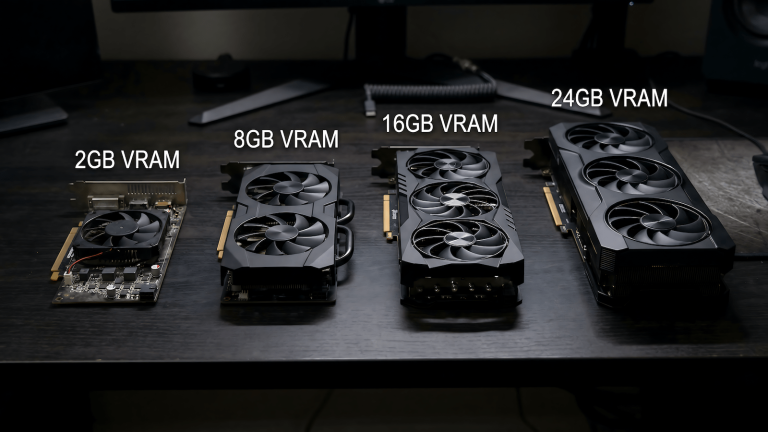

Custom inference infrastructure with published performance benchmarks is another real signal. Running your own model serving rather than routing to a third-party API requires engineering investment. Companies doing this can tell you about their infrastructure, their latency guarantees, and their capacity planning. Running local inference yourself gives you a reference point for what that infrastructure work actually involves.

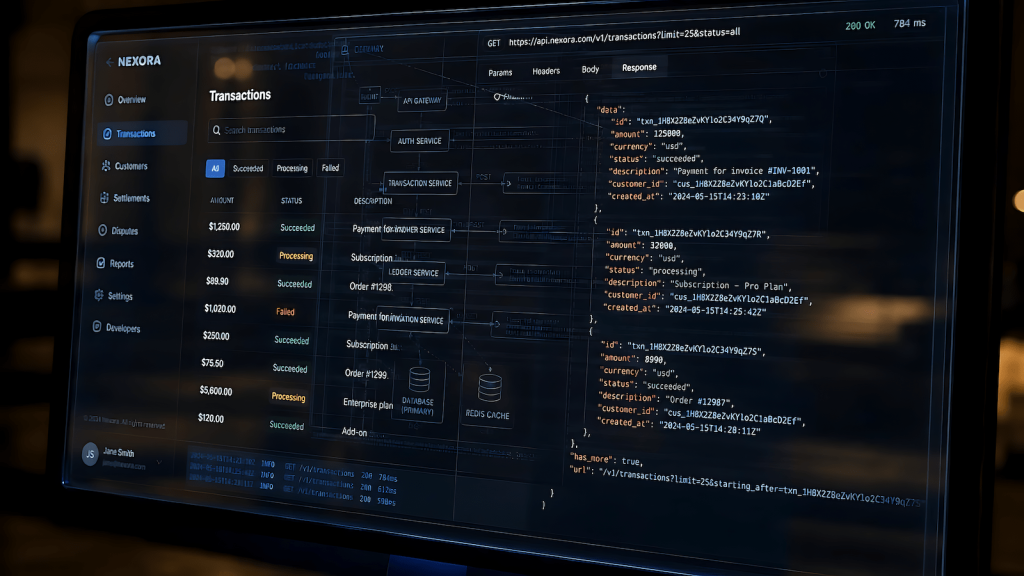

Proprietary data pipelines and retrieval systems, such as RAG implementations and knowledge bases built from domain-specific content, add genuine value that isn’t available from a raw API call. If a tool’s value proposition includes “AI trained on your industry’s data” and the vendor can show you what that means technically, that’s a different product from one that’s just routing to the same model you could call directly.

The Practical Filter

Before evaluating any AI tool: identify which foundation model it’s likely using, test whether direct API access to that model produces equivalent results, and calculate the cost difference between the tool and direct API access at your usage volume. If the tool costs meaningfully more than direct API access and the output quality is equivalent, the question is whether the interface and workflow convenience justify the premium. Sometimes they do. But make that choice with accurate information about what you’re actually paying for.
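The cost comparison is simple arithmetic once you know your usage volume. Here is a minimal sketch, assuming token-based API pricing quoted per million tokens. Every number below is a placeholder chosen for easy math, not a real price from any provider, so substitute the current published rates and your own usage.

```python
def monthly_api_cost(
    requests: int,
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Estimated monthly cost of calling the API directly, in dollars.

    input_tokens and output_tokens are averages per request; prices are
    dollars per one million tokens.
    """
    input_cost = requests * input_tokens / 1_000_000 * input_price_per_m
    output_cost = requests * output_tokens / 1_000_000 * output_price_per_m
    return input_cost + output_cost


def premium_ratio(subscription_price: float, api_cost: float) -> float:
    """How many times more the tool costs than direct API access."""
    if api_cost <= 0:
        raise ValueError("api_cost must be positive")
    return subscription_price / api_cost


# Placeholder usage and prices, for illustration only.
api_cost = monthly_api_cost(
    requests=2000,
    input_tokens=1500,
    output_tokens=500,
    input_price_per_m=1.0,
    output_price_per_m=4.0,
)
print(f"direct API: ${api_cost:.2f}/month")  # $7.00 with these placeholders
print(f"tool premium: {premium_ratio(30.0, api_cost):.2f}x")  # 4.29x at $30/month
```

The ratio says nothing about whether the premium is worth paying. It only makes the size of the premium explicit so the interface and workflow convenience can be weighed against a concrete number.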

The AI tools worth your time are the ones where the added layer contributes something real to your workflow. Everything else is a subscription fee for a UI.