The term “prompt engineering” has accumulated enough hype that it’s become nearly meaningless. Stripped of the buzzword layer, what it actually describes is writing instructions for a system that will be called with inputs you can’t fully anticipate. That’s a specification problem, and it has the same failure modes as every other specification problem: ambiguity, incompleteness, and assumptions that hold until they don’t.

A prompt contract is a prompt written with the rigor of a specification. It defines what the model should do, what it should not do, how it should handle ambiguous inputs, and what it should return when an input falls outside its intended scope. Writing prompt contracts that survive edge cases is the difference between a pipeline that works reliably in production and one that requires constant babysitting. This connects directly to structuring AI pipelines for production: the prompt is the input contract for the model itself.

Why Prompts Break at the Edges

A prompt that works on your test set is a prompt that works on the inputs you thought of. Edge cases are inputs you didn’t think of, and every production system eventually encounters them. A prompt that says “summarize this article in three bullet points” works until someone passes it a legal document, a JSON blob, a poem, or nothing at all. The prompt has an implicit assumption about what “article” means that breaks when the assumption is violated.

The failure mode isn’t usually a hard error. It’s a silently wrong output that looks plausible. A model asked to extract product names from a review will often extract something when there are no product names present rather than returning an empty result. That’s the model trying to be helpful against an instruction set that didn’t tell it what to do when its task isn’t possible. Knowing where AI tools actually fail rather than where they look impressive is prerequisite knowledge for writing prompts that hold up.

The Elements of a Prompt Contract

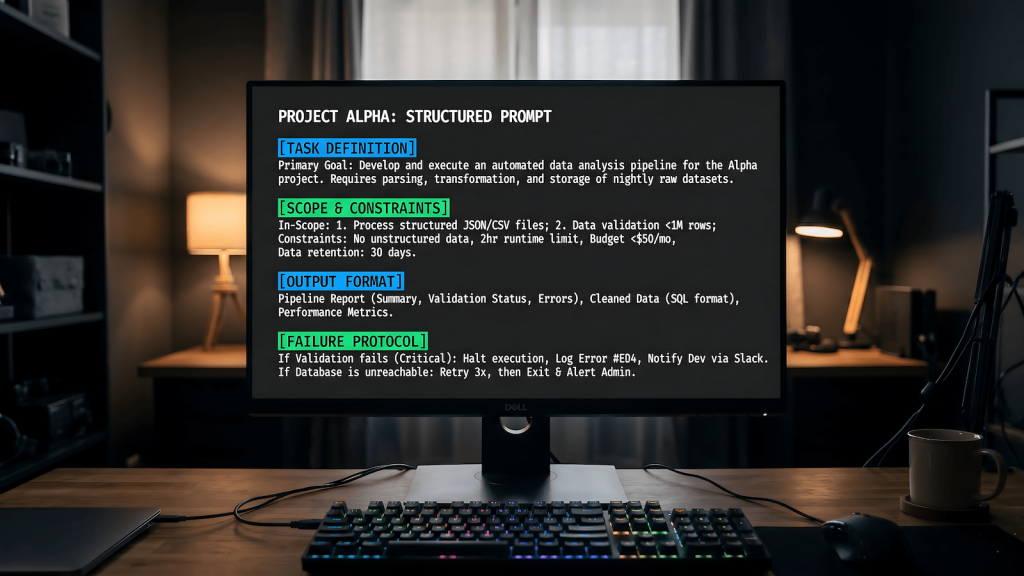

A prompt contract has six components. Task definition specifies what the model should do in concrete, unambiguous terms. Scope constraints define what inputs are in scope and what should happen with out-of-scope inputs. Output format specifies the exact structure of the expected response, including what an empty or negative result looks like. Edge case handling covers the specific boundary conditions you can anticipate. Failure protocol tells the model what to return when it can’t complete the task rather than letting it improvise. And invariants are the things that must always be true about the output regardless of input.

Most prompts include task definition and implicitly assume output format. They skip scope constraints, edge case handling, failure protocol, and invariants entirely. That’s why they break.
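One way to make the skipped components hard to skip is to give each one a named slot and refuse to render a prompt while any slot is empty. The sketch below is illustrative only: `PromptContract`, its field names, and the rendered section titles are hypothetical choices, not an established format.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptContract:
    """Hypothetical container for the six components of a prompt contract."""

    task: str
    scope: str
    output_format: str
    edge_cases: list[str] = field(default_factory=list)
    failure_protocol: str = ""
    invariants: list[str] = field(default_factory=list)

    def missing_components(self) -> list[str]:
        """Return the names of components that were left empty."""
        parts = {
            "task": self.task,
            "scope": self.scope,
            "output_format": self.output_format,
            "edge_cases": self.edge_cases,
            "failure_protocol": self.failure_protocol,
            "invariants": self.invariants,
        }
        return [name for name, value in parts.items() if not value]

    def render(self) -> str:
        """Assemble the prompt text, refusing to render an incomplete contract."""
        missing = self.missing_components()
        if missing:
            raise ValueError(f"incomplete contract, missing: {', '.join(missing)}")
        sections = [
            ("Task", self.task),
            ("Scope", self.scope),
            ("Output format", self.output_format),
            ("Edge cases", "\n".join(f"- {case}" for case in self.edge_cases)),
            ("If the task cannot be completed", self.failure_protocol),
            ("Invariants", "\n".join(f"- {rule}" for rule in self.invariants)),
        ]
        return "\n\n".join(f"{title}:\n{body}" for title, body in sections)
```

A prompt that only states the task and an output format fails `render()` with a list of what is missing, which turns "most prompts skip four components" into an error at build time rather than a surprise in production.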

Writing Scope Constraints

Scope constraints prevent the model from attempting tasks outside its defined purpose and, more importantly, tell it explicitly what to do when it encounters an out-of-scope input. A prompt for extracting dates from documents needs a scope constraint that says what to return when no dates are present. Without it, the model will hallucinate a date, return a generic statement that no dates were found, or format a failure response differently every time.

Explicit scope constraints look like: “If the input does not contain a clearly identifiable date, return exactly: {"dates": []}. Do not infer, approximate, or extract date-like strings that are not explicit dates.” That’s a scope constraint with a failure protocol embedded in it. It handles the edge case before the model encounters it rather than hoping the model makes a good decision on its own.
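A constraint like this is only useful if the calling code checks it. The following is a minimal sketch of such a check, assuming the agreed output is a JSON object with a single `dates` key and that dates are returned as ISO 8601 strings. The ISO format and the function name `validate_dates_output` are assumptions made for illustration; the constraint above does not specify a date format.

```python
import json
from datetime import date


def validate_dates_output(raw: str) -> list[str]:
    """Return the extracted dates if `raw` honors the contract, else raise ValueError."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc

    # The contract allows exactly one shape: an object with a single "dates" key.
    if not isinstance(data, dict) or set(data) != {"dates"}:
        raise ValueError("output must be an object with exactly one key, 'dates'")

    dates = data["dates"]
    if not isinstance(dates, list):
        raise ValueError("'dates' must be a list")

    for item in dates:
        if not isinstance(item, str):
            raise ValueError(f"date entries must be strings, got {item!r}")
        try:
            # Assumption: dates are ISO 8601 calendar dates such as 2024-01-05.
            date.fromisoformat(item)
        except ValueError as exc:
            raise ValueError(f"not an ISO 8601 date: {item!r}") from exc

    return dates
```

With this in place, a response such as `No dates were found.` is rejected as a contract failure instead of being passed downstream as if it were data.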

Testing Prompt Contracts

A prompt contract isn’t complete until it’s been tested against a boundary condition set. Build a test set that includes: inputs at the exact edges of the defined scope, inputs that are clearly out of scope, inputs that are ambiguous about whether they’re in scope, malformed inputs, empty inputs, and inputs designed to trigger the most common model failure modes (hallucination, format deviation, instruction-following failures).

Run every test case and verify the output against the contract definition. Output that doesn’t match the contract is a contract failure, not an acceptable variation. The n8n Ollama pipeline approach to automated content generation lives or dies on prompt contracts that handle edge cases reliably. When the pipeline runs unattended, there’s nobody to catch a silently wrong output.
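A boundary condition set can be run with very little machinery. The sketch below assumes the model is reachable through some function that takes input text and returns output text. `stub_model` is a stand-in written for this example, not a real client, and the cases reuse the date-extraction contract with its empty result `{"dates": []}`. All names here are hypothetical.

```python
import json
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BoundaryCase:
    name: str
    input_text: str
    check: Callable[[str], bool]  # True when the output honors the contract


def run_contract_tests(
    model: Callable[[str], str], cases: list[BoundaryCase]
) -> list[str]:
    """Run every case and return the names of cases that violated the contract."""
    failures = []
    for case in cases:
        try:
            ok = case.check(model(case.input_text))
        except Exception:
            ok = False  # a crash in the call or the check is also a failure
        if not ok:
            failures.append(case.name)
    return failures


def is_empty_result(output: str) -> bool:
    """Check for the exact empty result the contract requires."""
    try:
        return json.loads(output) == {"dates": []}
    except json.JSONDecodeError:
        return False


def stub_model(text: str) -> str:
    """Stand-in for a real model call; it deliberately improvises on empty input."""
    if not text.strip():
        return "No dates were found."
    return '{"dates": []}'


CASES = [
    BoundaryCase("empty input", "", is_empty_result),
    BoundaryCase("out of scope: poem", "Roses are red", is_empty_result),
    BoundaryCase("malformed: truncated JSON", '{"a": [', is_empty_result),
]

if __name__ == "__main__":
    print(run_contract_tests(stub_model, CASES))
```

The stub's friendly sentence on empty input is exactly the kind of plausible-looking output that a human reviewer would wave through and a contract check will not.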

Versioning and Maintenance

Prompt contracts change when the model changes, when the input distribution changes, or when the task requirements change. Treating prompts as static artifacts rather than versioned specifications is what turns a working pipeline into an unreliable one over time. Store prompt contracts in version control alongside your code, log which prompt version was used for each model call, and test the contract against your boundary condition set whenever a model update is deployed.
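Logging the prompt version per call can be as simple as hashing the prompt text and attaching the hash to each call record. This is a sketch under assumptions: the record fields, the logger name, and the 12-character truncation are illustrative choices, and a team that already tags prompts with version numbers in version control could log that tag instead.

```python
import hashlib
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("prompt_contracts")  # hypothetical logger name


def prompt_version(prompt_text: str) -> str:
    """Short content hash that changes whenever the prompt text changes."""
    return hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()[:12]


def call_record(prompt_text: str, model_name: str, input_text: str) -> dict:
    """Build a log record tying one model call to an exact prompt version."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model_name,
        "prompt_version": prompt_version(prompt_text),
        # Hash the input rather than storing it, in case it is sensitive.
        "input_sha256": hashlib.sha256(input_text.encode("utf-8")).hexdigest(),
    }


def log_call(prompt_text: str, model_name: str, input_text: str) -> dict:
    """Emit the record as one JSON line and return it to the caller."""
    record = call_record(prompt_text, model_name, input_text)
    logger.info(json.dumps(record))
    return record
```

When an output later turns out to be wrong, the record answers the first debugging question, which prompt and which model produced it, without anyone having to reconstruct the state of the repository on that day.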

A prompt contract is a specification. Write it like one, test it like one, and maintain it like one. The model is just a system that executes the specification, and as with any specification, the quality of the output is bounded by the quality of the instructions.