Most people are still arguing about how to phrase their prompts. Word choice. Tone. Whether to say “act as” or “you are.” That’s not where the leverage is. It never was.

The actual variable that determines output quality is what you put in the context window before the model ever reads your question. That’s context engineering. And once you understand the difference, you’ll stop tweaking prompts and start designing inputs.

What Prompt Engineering Actually Is (And Why It’s Not Enough)

Prompt engineering, the way most people practice it, is surface-level. You write a better question and hope the model responds better. Sometimes it does. More often you’re just adjusting the phrasing of an underspecified request and getting a slightly different version of a generic answer.

The problem isn’t the prompt. The problem is that the model has no idea who you are, what you’re building, what constraints you’re working within, or what “good” looks like for your specific situation. You’re asking for a specific answer without giving specific context. The model fills the gaps with averages. You get average output.

Context engineering fixes this at the source.

What Context Engineering Actually Means

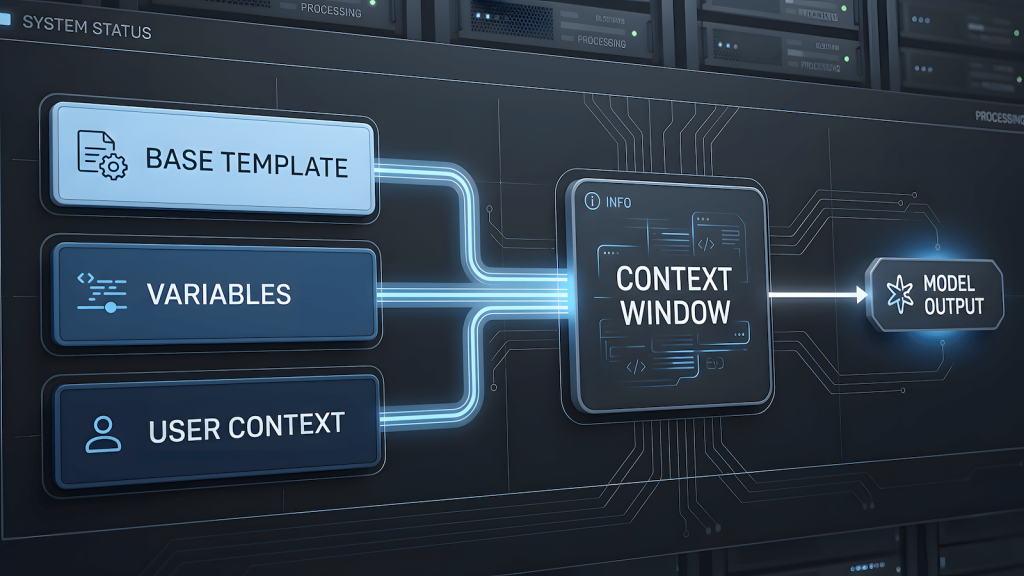

Context engineering is the deliberate design of everything that enters the model’s context window before it generates a response. That includes the system prompt, the role you assign, the constraints you set, the examples you provide, and the user-supplied variables that make the request specific to this situation and not some generic version of it.

It is not about writing longer prompts. It is about writing smarter inputs that give the model the right information in the right structure so it reasons correctly without guessing.

Three layers work together:

The base template is your structural backbone. It defines the role, the expected output format, the constraints, and the quality bar. This is where your domain expertise lives. It does not change per request. It is engineered once and trusted every time.

The variables are the selectable parameters. Test type, framework, target layer, scope. These are the dimensions that differentiate one use case from another. They slot into the base template at defined positions and narrow the output from general to specific.

The user context is the runtime input. A one-line description of the actual feature, system, or scenario being tested. This is what makes the output specific to this situation and not just a well-formatted generic response.

The model’s job is to reason from all three and produce something that fits the intersection. That intersection is where generic outputs stop and useful outputs begin.

Why This Works Better Than Prompt Tweaking

When you engineer context correctly, you are not hoping the model interprets your request generously. You are giving it everything it needs to interpret it correctly the first time.

Consider the difference between these two inputs:

Input A: “Write a regression test prompt for a login feature.”

Input B: System prompt defining the tester role and output format. Variable inputs: regression test, mobile app, biometric plus OTP fallback. User context: “Login flow uses Face ID as primary and falls back to 6-digit OTP if biometric fails three times.”

Input A gets a template. Input B gets a prompt that actually covers the biometric failure threshold, the OTP fallback trigger, the session behavior after failed attempts, and the edge cases specific to that flow. Not because the model is smarter. Because the context was smarter.

The model did not search for that information. It reasoned from what you gave it. That distinction matters because it means the quality ceiling is set by how well you design the context, not by how capable the model is on its own.

Where the Moat Actually Lives

This is the part nobody talks about when they sell you on prompt engineering courses.

The prompts themselves are not defensible. Anyone can read your prompts and copy them. What is defensible is the base template, specifically the one built from real operational experience in a specific domain. The base template for QA regression testing written by someone who has actually rebuilt regression suites under sprint pressure is fundamentally different from one written by someone who read about regression testing. The model cannot tell the difference. The output can.

This is why context engineering rewards domain experts. The base template is where your knowledge gets encoded. The variables and user context just activate it for a specific situation. If your base template is shallow, no amount of user context makes the output deep. If your base template carries real operational knowledge, even a minimal user input produces something specific and usable.

What I’m Building With This

I’m applying this architecture to a QA prompt builder over at QAJourney. The base templates are written from actual QA practice across manual, automation, regression, API, E2E, smoke, and security testing. The user selects their test type, framework, and scope. They add one line of feature context. The model assembles a ready-to-use prompt that fits their exact situation, not a generic version of it.

That’s context engineering in production. Not a theory. A tool.

If you do QA and want to see how this plays out in practice, the builder will be live at QAJourney.net. I’ll link it here when it ships.

The Takeaway

Stop optimizing your prompts. Start engineering your context.

Define the role precisely. Lock the output format. Set the constraints once in the base template. Let variables and user input handle the specifics. Give the model what it needs to reason correctly and it will. Give it a better-phrased question with no context and it will keep averaging.

Context engineering is not a technique. It is the mental model that makes everything else work.