The pipeline ran. That was not the problem.

The problem started when it tried to publish.

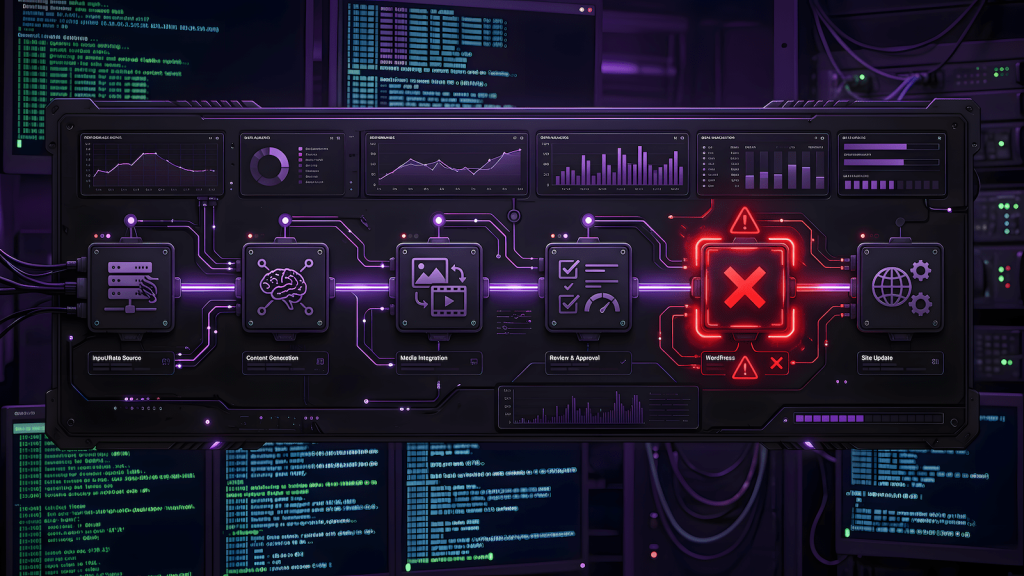

AutoBlog AI has been covered here in two previous posts: the experiment announcement documenting the six-stage multi-model writing pipeline, and the architecture breakdown covering how the Flask server, config system, and WordPress publisher were built. If you want the full picture of what the system is and how it was constructed, start there.

This post covers what happened when the system actually ran against real WordPress sites with real APIs, real provider rate limits, and real CMS permission rules. These are the lessons that only exist because the pipeline produced output that hit production. No amount of code review would have surfaced them before that.

The Test Run Produced Output. The Output Was Flawed.

The first end-to-end run published three articles across HealthyForge, RemoteWorkHaven, and MomentumPath. The pipeline completed all six stages without crashing. It published. That part worked.

The content did not.

All three articles showed the same failure pattern: the same ideas being restated across multiple paragraphs without any new information entering the article. A section would make a point, the next paragraph would restate it with different sentence structure, and the one after that would restate it again with a soft transition phrase like “furthermore” or “it is also essential to note that.” The word count target was being met by repetition, not substance.

The root cause was a conflict between two prompt rules. The pipeline instructed the writer to introduce new information in every paragraph and to maintain a minimum paragraph length. The model’s resolution to that tension was to restate existing ideas with enough variation that it could treat them as new. The rules were technically satisfied. The output was still padding.

On top of that, the HealthyForge post had an H1 mismatch. The on-page title rendered as “Lose Weight Fast” while the slug was morning-workout-routines-for-weight-loss. The SEO title and the actual post title had diverged somewhere in the pipeline. That is an indexing problem, not a cosmetic one.

All three posts were deleted. Keeping padded AI content on live sites with established human-written content is a direct risk under Google's Helpful Content Update (HCU). The pipeline proved it could run end-to-end. The output quality required fixes before production use.

WordPress Taxonomy Does Not Work the Way AI Assumes

The metadata stage generates clean taxonomy output. Categories, tags, focus keyphrase, SEO title, meta description. All of it structured correctly as JSON. None of it mapping to what WordPress actually needs.

The WordPress REST API does not accept category names. It accepts category IDs. So when the pipeline generated:

"categories": ["Fitness"]

WordPress either silently ignored it or the publisher crashed trying to create a new category. The behavior depended on which approach the code was using at the time.

This is not an edge case. It is how the API works. Names are human-readable labels. IDs are what the CMS uses internally. An autonomous pipeline that publishes to WordPress must perform taxonomy lookup before building the publish payload, mapping AI-generated names to their corresponding IDs on each specific site.

The fix required adding a lookup function that queries /wp-json/wp/v2/categories per site, builds a name-to-ID dictionary, and uses that mapping when constructing the publish request. Simple in execution. Non-obvious until you hit the failure.
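The lookup described above can be sketched roughly as follows. This is a minimal illustration, not the code from the repo: the function names fetch_categories and build_category_map are hypothetical, the fetch uses only the standard library, and lowercasing names for matching is an assumption about how the comparison should work.

```python
import json
import urllib.request


def fetch_categories(site_url: str) -> list[dict]:
    """Fetch one page (up to 100 entries) of categories from a WordPress site."""
    url = f"{site_url.rstrip('/')}/wp-json/wp/v2/categories?per_page=100"
    with urllib.request.urlopen(url, timeout=30) as resp:
        return json.load(resp)


def build_category_map(categories: list[dict]) -> dict[str, int]:
    """Map normalized (trimmed, lowercased) category names to numeric IDs."""
    return {c["name"].strip().lower(): c["id"] for c in categories}


# Example with data shaped like the REST API response (values are made up).
sample = [{"id": 12, "name": "Fitness"}, {"id": 7, "name": "Nutrition"}]
category_map = build_category_map(sample)

# The publish payload then carries IDs, not names.
payload = {"title": "Example post", "categories": [category_map["fitness"]]}
```

Building the map once per site and reusing it for the whole run keeps the publisher from calling the categories endpoint for every article.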

The Sitemap Assumption Was Wrong

The original architecture used the site’s post sitemap to give the pipeline context about what the site covers. The assumption was that sitemap data would also provide category structure, allowing the AI to select from existing taxonomy.

That assumption was incorrect.

Post sitemaps list URLs. That is their function. They do not expose category structure in a form that is reliably parseable by a pipeline. A URL like https://healthyforge.com/morning-workout-routines-for-weight-loss/ does not tell the system which categories that post belongs to. The sitemap was useful for internal linking context, which was its original purpose. It was not a valid taxonomy source.

The correct source for taxonomy is the WordPress REST API directly. Fetching /wp-json/wp/v2/categories?per_page=100 returns up to 100 categories with IDs and names in a single call, which covers the full list on sites with 100 or fewer categories. Larger taxonomies need additional paged requests, because 100 is the maximum page size. That data gets passed into the metadata stage so the AI selects from the site’s actual taxonomy instead of inventing its own.

Without that constraint, the AI will generate whatever categories seem plausible for the topic. Some will match existing categories by name. Most will not. The result is either silent failures at publish time or a rapidly expanding taxonomy full of near-duplicate categories that fragment your site structure.
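One way to apply that constraint is to render the fetched categories into the metadata prompt as an explicit allowed list. The sketch below is an assumption about how that could look: build_taxonomy_constraint is a hypothetical helper, and the prompt wording is illustrative rather than the wording AutoBlog AI actually uses.

```python
def build_taxonomy_constraint(categories: list[dict], max_choices: int = 2) -> str:
    """Render site categories as an allowed-list instruction for the prompt."""
    # Deduplicate and sort so the prompt is stable between runs.
    names = sorted({c["name"] for c in categories})
    allowed = "\n".join(f"- {name}" for name in names)
    return (
        f"Choose at most {max_choices} categories from this list. "
        "Use the names exactly as written and do not invent new ones.\n"
        f"{allowed}"
    )


# Example with made-up category data shaped like the REST API response.
constraint = build_taxonomy_constraint(
    [{"id": 12, "name": "Fitness"}, {"id": 7, "name": "Nutrition"}]
)
```

A prompt constraint reduces invented categories but does not guarantee compliance, so the publisher still needs to validate the returned names against the site's taxonomy.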

Author-Level API Permissions Break Category Creation

The WordPress user account AutoBlog AI uses to publish is set to Author. Authors can assign existing categories but cannot create new ones. A POST request to /wp-json/wp/v2/categories from an Author-level account returns:

403 Forbidden

This is correct WordPress behavior. It is also a hard wall for any pipeline that tries to create taxonomy on the fly.

The resolution was to change the pipeline’s behavior rather than the account permissions. Raising the API account to Administrator to enable category creation is technically possible. It is also a security risk that is not worth taking for automation that runs continuously on a production site.

The correct approach is to never attempt category creation from the pipeline. Fetch existing taxonomy. Map to it. If the AI generates a category name that does not exist on the site, skip it rather than error. Pre-create your taxonomy in WordPress manually before the pipeline runs. The system enforces your structure; it does not define it.

Free Model Rate Limits Hit the Metadata Stage

The metadata stage was configured to use google/gemma-3-27b-it:free via OpenRouter. During a test run, that model returned a 429 after completing stages one through four cleanly:

google/gemma-3-27b-it:free is temporarily rate-limited upstream.

Free tier models on OpenRouter’s shared pool are subject to upstream rate limits from the underlying provider, Google AI Studio in this case. Those limits are not documented predictably and can activate mid-pipeline.

The pipeline had a retry handler with a 65-second wait. The model rate-limited again on the retry. The fallback then routed the metadata stage to Groq instead, which completed successfully, and the pipeline continued to stage six.

That sequence confirmed two things. First, free tier models on shared infrastructure cannot be treated as reliable primary providers for production pipelines. They are useful for testing and cost reduction but need a paid or more stable fallback behind them. Second, the provider fallback architecture worked exactly as intended. A single provider failure did not kill the pipeline.
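The retry-then-fallback sequence can be sketched as below. This is an illustration under stated assumptions, not the repo's call_model(): the RateLimited exception and call_with_fallback are hypothetical names, providers are modeled as plain callables, and the sleep function is injectable so the wait can be skipped in tests. The changelog describes the real fallback as triggering on any provider exception; this sketch only handles the rate-limit case.

```python
import time
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error for a provider that answered with HTTP 429."""


def call_with_fallback(
    primary: Callable[[str], str],
    fallback: Callable[[str], str],
    prompt: str,
    retries: int = 1,
    wait_seconds: float = 65.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Try the primary provider, wait and retry, then switch to the fallback."""
    for attempt in range(retries + 1):
        try:
            return primary(prompt)
        except RateLimited:
            # Only wait if another attempt on the primary is coming.
            if attempt < retries:
                sleep(wait_seconds)
    # Primary exhausted: any error raised by the fallback propagates.
    return fallback(prompt)
```

With the defaults, a primary that stays rate-limited is called twice with one 65-second wait in between, and then the fallback handles the stage.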

For the metadata stage specifically, the model does not need to be large. It needs to return clean JSON consistently. Groq’s llama-3.3-70b-versatile handles this well and stays within the free tier limits comfortably.

JSON Parsing Fails When Models Add Explanations

Several stage failures during testing were not provider errors. They were JSON parse errors.

JSONDecodeError: Extra data: line 10 column 1

The strategist stage returns a JSON object defining the article topic, angle, pain points, outline structure, and secondary keywords. The metadata stage returns a JSON object for the full SEO package. When either of those stages uses a model that appends explanation text after the JSON block, the standard json.loads() call fails.

Most models in most configurations return clean JSON when instructed to. Some do not, and the behavior is not always consistent across runs with the same model. The fix is to extract JSON from the raw response using a regex match against the outermost {...} block rather than assuming the entire response is valid JSON. Once that extraction is in place, models that occasionally add a sentence or two after the closing brace stop causing pipeline failures.
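A minimal version of that extraction might look like this. The name extract_json appears in the changelog, but the body below is a sketch and may differ from the repo's implementation. It uses a greedy regex that spans from the first opening brace to the last closing brace in the response.

```python
import json
import re

# Greedy match: first "{" through the last "}" in the response, across lines.
_JSON_BLOCK = re.compile(r"\{.*\}", re.DOTALL)


def extract_json(raw: str) -> dict:
    """Pull the outermost {...} block out of a model response and parse it."""
    match = _JSON_BLOCK.search(raw)
    if match is None:
        raise ValueError("no JSON object found in model response")
    return json.loads(match.group(0))


raw = (
    'Here is the plan:\n'
    '{"topic": "x", "outline": {"h2": ["a", "b"]}}\n'
    'Let me know if you want changes.'
)
parsed = extract_json(raw)
```

One limitation of the greedy match: if the trailing explanation itself contains a closing brace, the captured span is no longer valid JSON and json.loads() still raises. For responses that start with the JSON object, json.JSONDecoder().raw_decode() is a standard-library alternative that parses the first complete value and ignores whatever follows.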

The Pipeline Architecture Held

Every failure described above was a configuration, integration, or prompt issue. The six-stage pipeline itself did not break. The orchestration ran correctly. The stage sequencing held. The model routing worked. The review queue captured output before it hit WordPress. The fallback routing activated when it was supposed to.

That matters because it means the architecture is sound. The problems were at the edges: where the pipeline met the CMS, where free models met their limits, where AI output met structured data requirements. Those are solvable problems. They are also the problems nobody documents because most people stop before the pipeline reaches a live site.

Versions 3.5 and 3.6

The fixes from this run are captured in the changelog below and in the GitHub repo.

AutoBlog AI Changelog

v3.6 (2026-03-12) — CMS Integration Fixes

- Added get_site_categories() function: fetches live taxonomy from /wp-json/wp/v2/categories and builds name-to-ID map per site
- Added get_term_id() function: replaces get_or_create_term_id(), lookup only, no creation attempt
- Removed category and tag creation logic from publisher, pipeline now maps to existing taxonomy only
- Updated publish payload to pass category and tag IDs correctly
- Yoast SEO field mapping added to publish payload: _yoast_wpseo_title, _yoast_wpseo_metadesc, _yoast_wpseo_focuskw
- meta_keywords custom field now correctly passed through REST API meta object
- Categories and tags passed into Stage 5 metadata prompt as allowed list, AI constrained to site taxonomy

v3.5 (2026-03-12) — Pipeline Stability and Content Quality

- Added extract_json() utility: regex-based JSON extraction from raw model responses, replaces bare json.loads() in Stage 1 and Stage 5
- Added provider fallback in call_model(): on any provider exception, retries the stage with Groq llama-3.3-70b-versatile before raising
- Extended Ollama timeout from 300s to 600s
- Expanded decommissioned model migration list: gemini-2.0-flash, gemini-2.5-pro-preview added
- Stage 2 Writer: removed conflicting paragraph rules that caused AI padding behavior, replaced 5-sentence minimum with 3 to 5 sentence range for readability
- Stage 2 Writer: added explicit ban on restating concepts already covered in the article
- H1 and SEO title alignment enforced: seo_title from metadata now used consistently as the WordPress post title

AutoBlog AI is being built in public on EngineeredAI.net. The code is in the GitHub repo. The experiment results post drops after the two-week run.